What's the best way to implement Fan-out/Fan-in serverlessly in 2024?


Back in 2018, I shared [1] several ways to implement fan-out/fan-in with Lambda. A lot has changed since, so let’s explore the solution space in 2024.

Remember, what’s “best” depends on your context. I will do my best to outline the trade-offs you should consider.

Also, I will only consider AWS services in this post. But there is a wealth of open-source and third-party services that you can use too, such as Restate.

If you’re not sure whether you need fan-out/fan-in or map-reduce, then you should read my previous post first [2]. It explains the difference between the two and when to use which.

Ok, let’s go!

Step Functions

Fan-out has always been easy on AWS. You can use SQS to distribute tasks across many workers for parallel processing.

But fan-in makes you work! You need to keep track of the individual results so you can act on them when all the results are in.

Luckily for us, Step Functions has productized the fan-out/fan-in pattern with its Map state. You provide an input array and specify how to process each item in the array. The

You can take this output array as input to another Importantly, the

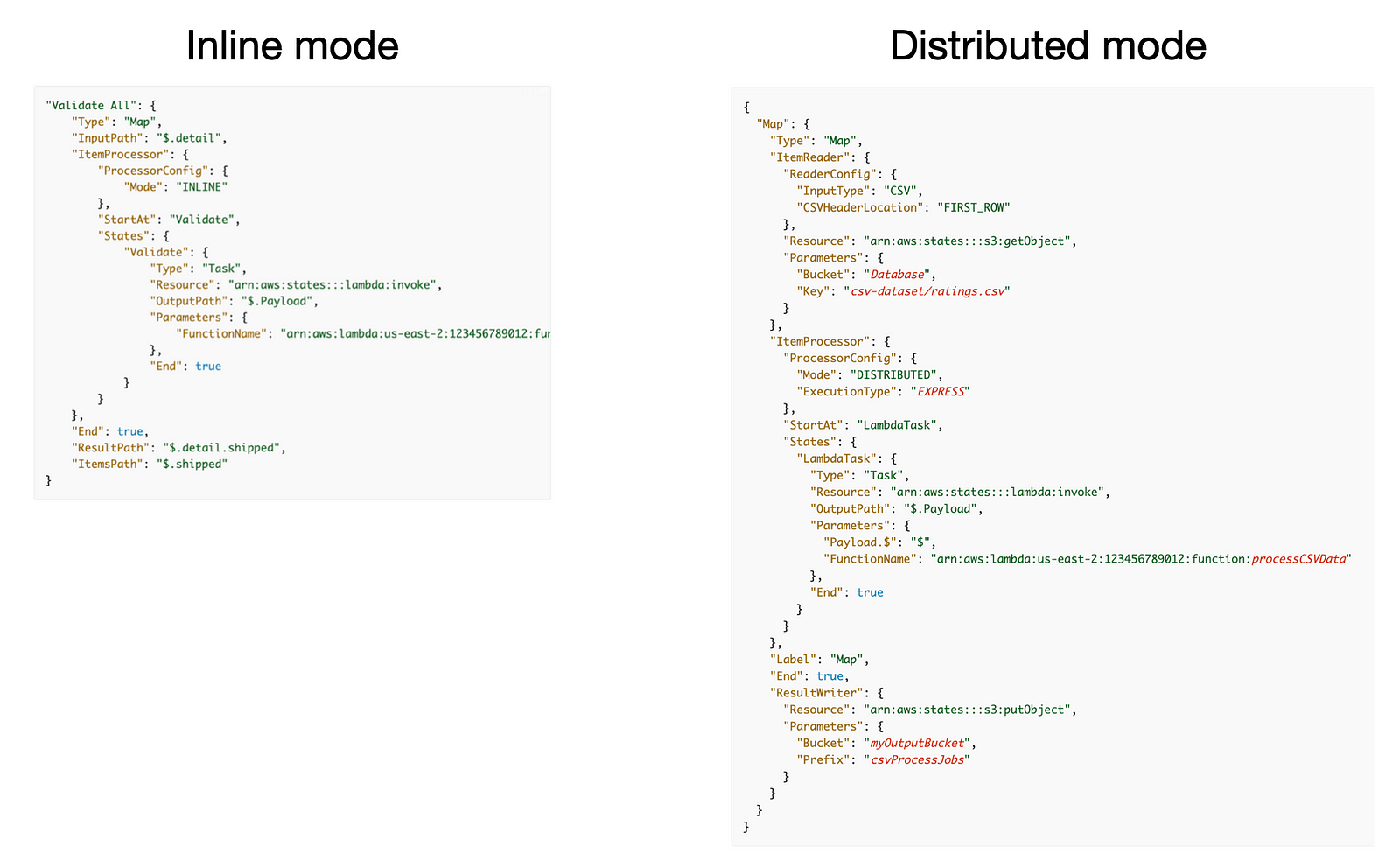

With these two modes, you can use the

The main considerations here are ease of configuration and cost.

Ease of configuration

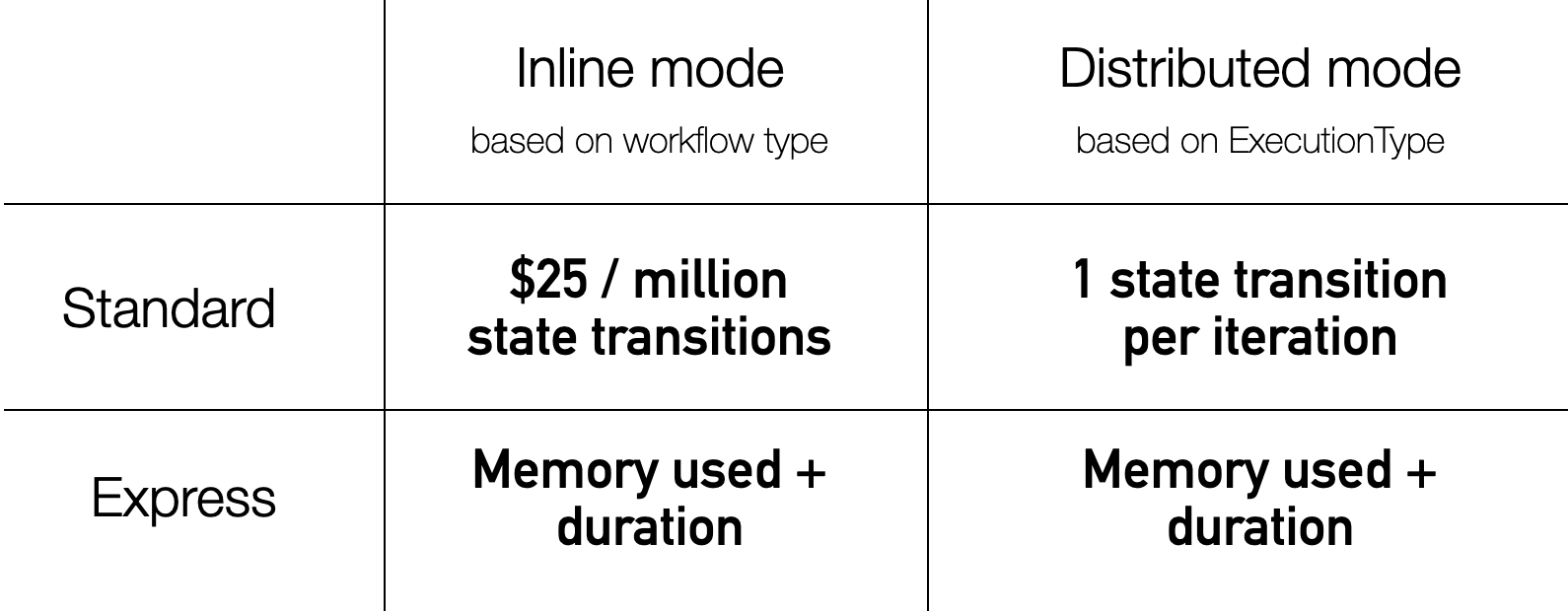
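To give a feel for how little configuration is involved, here is a minimal sketch that builds a Map state definition as a Python dictionary and prints it as JSON. The field names (`ItemsPath`, `ItemProcessor`, `ProcessorConfig`, `Mode`, `ExecutionType`, `MaxConcurrency`) follow the Amazon States Language as I understand it. The `process-item` function name, the `$.items` and `$.results` paths, and the concurrency values are placeholders invented for illustration, not something from this post.

```python
import json


def build_map_state(mode="INLINE", execution_type=None, max_concurrency=10):
    """Return a Map state (as a dict) that fans out over the array at $.items."""
    processor_config = {"Mode": mode}
    if mode == "DISTRIBUTED":
        # In distributed mode each iteration runs as a child workflow,
        # so a workflow type has to be chosen: STANDARD or EXPRESS.
        processor_config["ExecutionType"] = execution_type or "STANDARD"

    return {
        "Type": "Map",
        "ItemsPath": "$.items",          # assumed location of the input array
        "MaxConcurrency": max_concurrency,
        "ItemProcessor": {
            "ProcessorConfig": processor_config,
            "StartAt": "ProcessItem",
            "States": {
                "ProcessItem": {
                    "Type": "Task",
                    "Resource": "arn:aws:states:::lambda:invoke",
                    "Parameters": {
                        "FunctionName": "process-item",  # hypothetical function
                        "Payload.$": "$",
                    },
                    "End": True,
                }
            },
        },
        "ResultPath": "$.results",       # fan-in: results collected as an array
        "End": True,
    }


if __name__ == "__main__":
    state = build_map_state("DISTRIBUTED", "EXPRESS", max_concurrency=1000)
    print(json.dumps(state, indent=2))
```

The printed JSON would sit inside the `States` section of a larger state machine definition. Treat it as a starting point to check against the current Step Functions documentation rather than a complete workflow.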

The

In addition, you have to decide what workflow type to use. This is an important decision.

Each iteration of the map state is executed as a child workflow. Standard workflows can run for up to a year, but an Express workflow can only run for five minutes. See this article [3] for a more detailed comparison of these two workflow types.

Perhaps most importantly, this decision affects how the iterations are charged.

Cost

In distributed mode, Express Workflows are priced exactly as before. However, Standard Workflows are priced as one state transition per iteration. That is, even if an iteration executes 10 state transitions, it is priced as one state transition.

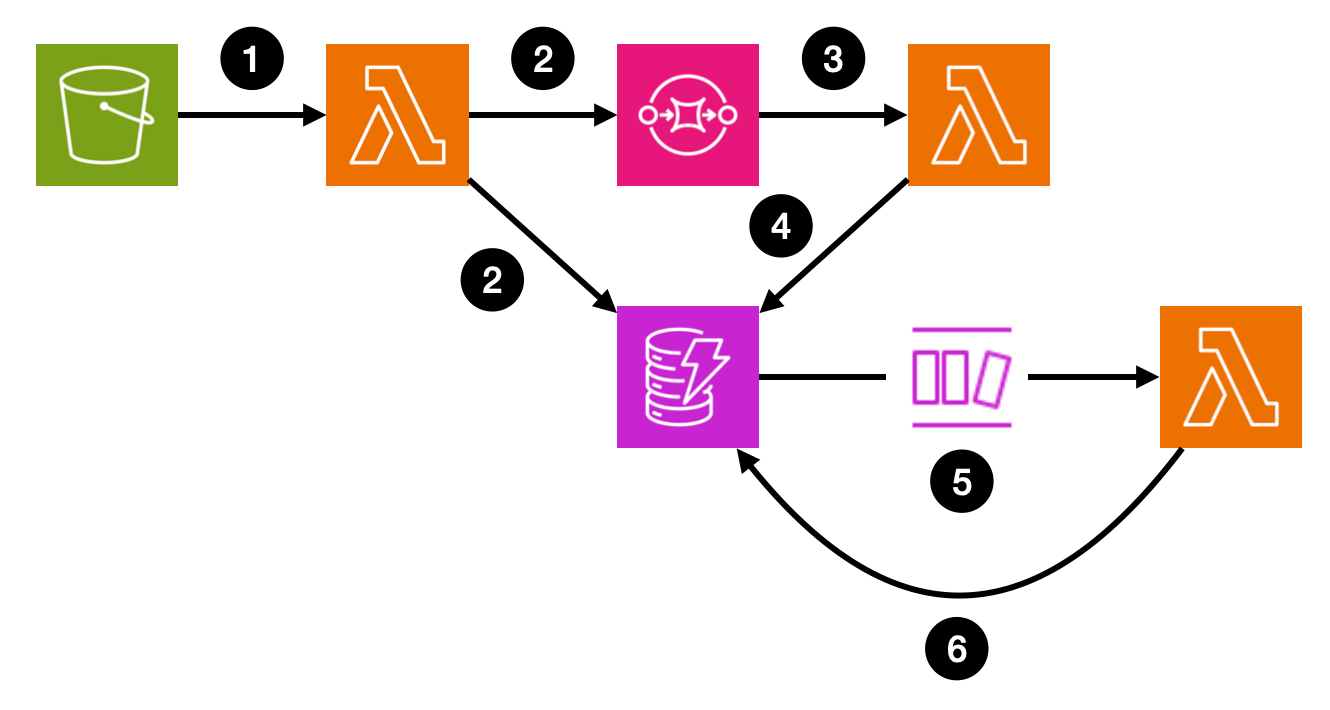
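As a back-of-the-envelope check on the Standard Workflows pricing described above, here is a small sketch that estimates the per-iteration charge. The rate of $0.025 per 1,000 state transitions is an assumption based on the published us-east-1 list price, so verify it for your region. The `batch_size` parameter is also an assumption: it models grouping several items into one iteration. The estimate ignores the parent workflow's own transitions and any Lambda costs.

```python
import math

# Assumption: Standard Workflows list price of USD 0.025 per 1,000 state transitions.
PRICE_PER_TRANSITION_USD = 0.025 / 1000


def standard_iteration_cost(num_items, batch_size=1,
                            price_per_transition=PRICE_PER_TRANSITION_USD):
    """Estimate the charge when each iteration is billed as one state transition."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    # Each batch of items becomes one iteration, hence one billed transition.
    iterations = math.ceil(num_items / batch_size)
    return iterations * price_per_transition


if __name__ == "__main__":
    for batch in (1, 100):
        cost = standard_iteration_cost(1_000_000, batch_size=batch)
        print(f"1,000,000 items, batch size {batch}: ${cost:.2f}")
```

Under these assumptions, one million items works out to roughly $25 when each item is its own iteration, and about $0.25 when items are grouped into batches of 100.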

With this in mind, the Step Functions cost for processing large input arrays will not be astronomical. However, you also need to take into account other associated costs, such as the cost of Lambda invocations. If you need to process a large array of inputs, consider processing them in batches.

Summary

Step Functions’ Map state can handle workloads at any scale. Even if you need to fan out to millions of tasks, the distributed map state can offer a cost-effective solution.

If you want a balanced solution that offers good developer productivity and cost efficiency, you should choose Step Functions.

But if cost is your primary concern, maybe because you need to produce millions of tasks frequently, then you should implement a custom solution.

Custom-built solutions

Here’s a common pattern for building a custom fan-out/fan-in solution:

This is likely a more cost-efficient solution than Step Functions. There is built-in batching for the SQS and DynamoDB stream functions, courtesy of Lambda’s EventSourceMapping, so the Lambda-related processing costs will be lower.

Furthermore, here is a rough estimate for other costs (assuming one million items):

As you can see, these estimated costs are much lower than those for Step Functions. However, this solution has many moving parts, and you have to own the uptime.

If you frequently process millions of items, then a custom solution like this can make sense.

Summary

When choosing a solution, it’s not just about finding the cheapest option. It’s about getting the most value for your investment. Remember, you get what you pay for.

Think of it like buying tools for a job. You don’t always buy the cheapest tools because they might not last. But you also don’t need the most expensive ones if they offer more than you need. You aim for the right balance: reliable enough to get the job done efficiently without overspending.

In the same way, when building your serverless architecture, choose the solution that offers the best trade-off between cost, complexity, and capability for your specific needs.

Links

[1] How to do fan-out and fan-in with AWS Lambda

[2] Do you know your Fan-Out/Fan-In from Map-Reduce?
